
hehe...he's such a goof!!

Mr Bean - to the beach- presented to you by mr Bean Fan club

Pairing wine with fast food just as apropos as with fine dining, says expert

By Judy Creighton, THE CANADIAN PRESS

A bucket of Kentucky Fried Chicken suits Natalie MacLean just fine, thank you. It creates a challenge to do what she does best - pairing wines with food.

This internationally renowned wine aficionado and expert admits without abashment that she can't cook.

"But I've learned how to compensate for my lack of cooking skills by matching wines with every kind of meal, including fast food," says MacLean.

She and her family indulge in all sorts of ready prepared foods from dining out, takeout, TV dinners and deli stuff to canned beans and "even our son's mac and cheese, which by the way goes beautifully with a Chilean Chardonnay."

MacLean calls this pairing of wines with fast foods "shabby chic or like putting rhinestones on your jeans."

She stresses that the more important principle for her is that wine can go with all sorts of dishes.

"We don't have the wine culture that Europe does where they match wine with simple dishes, rustic dishes and everyday food," she points out. "We tend to think of wine as just for fancy meals and special occasions, but it is not."

"The same food-and wine-matching principles that you use for those fancy dishes can be applied to very basic fast food. It's about texture, weight and flavour. You are either complementing or contrasting."

So what would she drink with KFC?

"This a rich fatty dish, so to cut through the fat of the fried chicken you could choose a zippy off-dry Riesling," MacLean suggests. "Or a nice rich buttery Chardonnay from Chile or California because the fatness of the dish is going to marry with the fatness of the wine."

When sending out for Chinese food with its sweet and sour nuances, choose a wine that can handle both, she says.

"My favourite is off-dry Riesling from either Canada or Germany because it has a touch of sweetness, but it also has the acidity to go with the sour element in Asian cuisine."

And she adds that any wine that is bone dry is going to taste bitter with Chinese food.

With Indian food, very much the same principle applies because of the spiciness, says MacLean. "I would go with the low-alcohol white sparklers which have a little sweetness."

For wine drinkers who prefer reds over whites, "make sure you are choosing one that is not high in alcohol or tannins when eating spicy foods," she says.

"Go with fruity low-tannin reds like Pinot Noir, Beaujolais, Gamay or even Zinfandel."

Finally, MacLean deals with one of the most commonly ordered-in fast foods - pizza.

"Pizza is easy," she says. "It is a classic match with Italian wines because two of the dominant elements are the cheese and tomato, so a lot of Italian reds have a good amount of mouth-watering acidity."

"That acidity cuts through the cheese and also matches the acidity in the tomatoes, so varieties such as Barberas and Chianti are wicked with pizza."

To assist wine drinkers, MacLean offers a free interactive matching tool on her website www.nataliemaclean.com/matcher.

"I believe that the old rules about white wine with white meat and red wine with red meat just don't give enough guidance any more."

http://ca.lifestyle.yahoo.com/food-entertaining/articles/drinks-desserts/cp/home_family-pairing_wine_with_fast_food_just_as_appropos_as_with_fine_dining_says_expert/print

Okay...just don't drop me :)

Lost in the woods? Expert advice on which worms to eat and leaves to lick

By Michael Oliveira, THE CANADIAN PRESS

TORONTO - If you're a city slicker lost this long weekend in the wilderness with no food or water, not to worry: There are more than enough leaves to lick and bugs to barbeque until you find your way back to paved civilization.

That's the advice of Rich Swift, an expert outdoorsman and owner of outdoor adventure store Algonquin Outfitters in Huntsville, Ont., who is bracing for a weekend rush of visitors to Ontario's pristine Algonquin Park wilderness in the heart of cottage country.

Swift doesn't typically recommend that people seek out insects to snack on, but in a serious moment of desperation, he said a lost camper should know which bugs can be protein-rich - and often quite tasty.

"My usual rule of thumb is any insect under an inch is safe to eat, anything over an inch I'd usually like to cook up a little bit so it gets rid of the bitterness," Swift said.

"With worms, they're kind of mushy, but if you put them over a rock on a nice sunny day they dry out and they'll actually almost be like a jerky."

Fried worms can also be quite nice, Swift insisted, and are an amazing source of protein if there's little else to eat.

"Worms are one of the highest sources of protein along with ants, ants pound for pound have more than most food sources you can find."

Berries are probably a little more palatable to most, although there's always the danger of picking one of the poisonous variety.

Swift also recommends doing some research before heading into the bush about which berries to avoid; almost all white and yellow berries aren't worth taking the chance.

"If you take those and put them right up to your lips, if it's tingling or it has a bitter taste to it, 90 per cent of the time it could be poisonous."

Even more important than finding food, said Swift, is finding water, since most people can't go any longer than three days without fluids.

Morning dew is drinkable, depending on where it collects, and a small but good source for a little sip is leaves from trees, he said.

Garbage bags or clean tarps can also be hung out overnight to collect dew or rain.

You can also try looking downhill for a stream or a lake, although drinking out of either is risky and should only be done in an emergency situation.

"If you're in an extreme situation for survival, you're better off drinking water even if it potentially might have some parasites in it," he said.

But if you have a fire going - and of course Swift has some tips on how to easily start one - water should be boiled first to make sure it's safe to drink.

Magnesium strips can be purchased at most outdoors stores and make starting a fire much easier than rubbing two sticks together, he said.

Dryer lint or old-fashioned film canisters loaded with cotton balls and petroleum jelly also take the stress out of making a fire.

Another method - that only works with plenty of sun - is to use a pair of glasses, a piece of broken glass or a mirror to reflect the sunlight into some kindling, which should spark.

There's also the ever-present threat of bears in the wilderness, which Swift said are rarely a threat if treated with respect and distance.

"Less than one per cent of the people I ever send out on a canoe trip in Algonquin Park even see a bear, so if you do see a bear, first enjoy the experience - bears are really fascinating creatures," he said.

"The most important thing is never approach a bear. Always back away, keep in sight of the bear but always backwards. Never turn around and run."

The standard advice about keeping campsites clean and free of open food also stands, Swift added. Food should be packed tightly and stored somewhere secure, such as high in a tree, but never inside a tent.

"If a bear is mucking about a campsite and you feel uncomfortable about it, what I usually tell people to do is grab their pots, bang them together, make themselves look big and say, 'Go away, bear, Go away, bear."'

http://ca.lifestyle.yahoo.com/food-entertaining/articles/special-occasions/cp/home_family-lost_in_the_woods_expert_advice_on_which_worms_to_eat_and_leaves_to_lick/print

Cancer 'Cure' In Mice To Be Tested In Humans

ScienceDaily (June 30, 2008) — Scientists at Wake Forest University Baptist Medical Center are about to embark on a human trial to test whether a new cancer treatment will be as effective at eradicating cancer in humans as it has proven to be in mice.

The treatment will involve transfusing specific white blood cells, called granulocytes, from select donors, into patients with advanced forms of cancer. A similar treatment using white blood cells from cancer-resistant mice has previously been highly successful, curing 100 percent of lab mice afflicted with advanced malignancies.

Zheng Cui, Ph.D., lead researcher and associate professor of pathology, will be announcing the study June 28 at the Understanding Aging conference in Los Angeles.

The study, given the go-ahead by the U.S. Food and Drug Administration, will involve treating human cancer patients with white blood cells from healthy young people whose immune systems produce cells with high levels of cancer-fighting activity.

The basis of the study is the scientists' discovery, published five years ago, of a cancer-resistant mouse and their subsequent finding that white blood cells from that mouse and its offspring cured advanced cancers in ordinary laboratory mice. They have since identified similar cancer-killing activity in the white blood cells of some healthy humans.

"In mice, we've been able to eradicate even highly aggressive forms of malignancy with extremely large tumors," Cui said. "Hopefully, we will see the same results in humans. Our laboratory studies indicate that this cancer-fighting ability is even stronger in healthy humans."

The team has tested human cancer-fighting cells from healthy donors against human cervical, prostate and breast cancer cells in the laboratory -- with surprisingly good results. The scientists say the anti-tumor response primarily involves granulocytes of the innate immune system, a system known for fighting off infections.

Granulocytes are the most abundant type of white blood cells and can account for as much as 60 percent of total circulating white blood cells in healthy humans. Donors can give granulocytes specifically without losing other components of blood through a process called apheresis that separates granulocytes and returns other blood components back to donors.

In a small study of human volunteers, the scientists found that cancer-killing activity in the granulocytes was highest in people under age 50. They also found that this activity can be lowered by factors such as winter or emotional stress. They said the key to the success for the new therapy is to transfuse sufficient granulocytes from healthy donors while their cancer-killing activities are at their peak level.

For the upcoming study, the researchers are currently recruiting 500 local potential donors who are 50 years old or younger and in good health to have their blood tested. Of those, 100 volunteers with high cancer-killing activity will be asked to donate white blood cells for the study. Cell recipients will include 22 cancer patients who have solid tumors that either didn't respond originally, or no longer respond, to conventional therapies. The study will cost $100,000 per patient receiving therapy, and for many patients (those living in 22 states, including North Carolina) the costs may be covered by their insurance company. There is no cost to donate blood.

For more information about qualifications for donors and participants, go to http://www.wfubmc.edu/LIFT (Web site will be available the evening of 6/27.) Cancer-killing ability in these cells is highest during the summer, so researchers are hoping to find volunteers who can afford the therapy quickly.

"If the study is effective, it would be another arrow in the quiver of treatments aimed at cancer," said Mark Willingham, M.D., a co-researcher and professor of pathology. "It is based on 10 years of work since the cancer-resistant mouse was first discovered."

Volunteers who are selected as donors -- based on the observed potential cancer-fighting activity of their white cells -- will complete the apheresis, a two- to three-hour process similar to platelet donation, to collect their granulocytes. The cancer patients will then receive the granulocytes through a transfusion -- a safe process that has been used for more than 30 years. Normally, the treatment is used for patients who have antibiotic-resistant infectious diseases. The treatment will be given for three to four consecutive days on an outpatient basis. Up to three donors may be necessary to collect enough blood product for one study participant.

"The difference between our study and the traditional white cell therapy is that we're selecting the healthy donors based on the cancer-killing ability of their white blood cells," said Cui. The scientists are calling the therapy Leukocyte InFusion Therapy (LIFT).

The goal of the phase II study is to determine whether patients can tolerate a sufficient amount of transfused granulocytes for the treatment. Participants will be monitored on a regular basis, and after three months scientists will evaluate whether the treatment results in clear clinical benefits for the patients. If this phase of the study is successful, scientists will expand the study to determine if the treatment is best suited to certain types of cancer.

Yikong Keung, M.D., a medical oncologist, is the chief clinical investigator of the study. Gregory Pomper, M.D., assistant professor of pathology and the director of the Wake Forest Baptist blood bank, will oversee the blood banking portion of the study.

Adapted from materials provided by Wake Forest University Baptist Medical Center, via EurekAlert!, a service of AAAS.

Wake Forest University Baptist Medical Center (2008, June 30). Cancer 'Cure' In Mice To Be Tested In Humans. ScienceDaily. Retrieved August 10, 2008, from http://www.sciencedaily.com/releases/2008/06/080628155300.htm

Tropical Anemia: One of Africa's Great Killers and a Rationale for Linking Malaria and Neglected Tropical Disease Control to Achieve a Common Goal

Peter J. Hotez1*, David H. Molyneux2*

1 Department of Microbiology, Immunology, & Tropical Medicine, The George Washington University and Sabin Vaccine Institute, Washington, D.C., United States of America, 2 Liverpool School of Tropical Medicine, Liverpool, United Kingdom

Citation: Hotez PJ, Molyneux DH (2008) Tropical Anemia: One of Africa's Great Killers and a Rationale for Linking Malaria and Neglected Tropical Disease Control to Achieve a Common Goal. PLoS Negl Trop Dis 2(7): e270. doi:10.1371/journal.pntd.0000270

Published: July 30, 2008

“Wiping out malaria would join the eradication of smallpox as one of the greatest accomplishments in human history. It is a goal we can achieve.”

Melinda Gates, co-founder, Bill & Melinda Gates Foundation

With more than 1 million child deaths annually, malaria remains the single leading killer of young children in sub-Saharan Africa [1]. Millions more young children survive, but still suffer from severe anemia and permanent neurological damage [1], as well as more subtle neuropsychiatric disturbances including impaired cognition and memory [2]. Malaria in pregnancy is also a major cause of maternal deaths and low birth weight [3], and together these maternal and child health effects account for huge economic losses that trap families in poverty [4]. As a result, malaria is now considered one of the key forces preventing the development of the African continent [4]. In response to a growing malaria crisis, the Bill & Melinda Gates Foundation recently announced an ambitious program of expanded malaria control, with a long-term goal of malaria eradication [5]. The major elements of expanded malaria control include strengthening of prevention and treatment programs worldwide through the Global Fund to Fight AIDS, Tuberculosis and Malaria, the United States President's Malaria Initiative, the World Bank Malaria Control Booster Program, scale-up of national control programs [5], coordination through the Roll Back Malaria Partnership based at the World Health Organization (WHO) [6], and advocacy by Malaria No More and other organizations [7].

In the early 1970s, an intensified effort to interrupt the transmission of malaria was conducted in a group of villages near the town of Garki in northern Nigeria [8]. Through household spraying, mass drug administration, and other measures, there was a temporary reduction in malaria deaths, but overall the Garki Project showed that interrupting malaria transmission was not possible even when a full armamentarium of control tools was applied [8]. An important difference between then and now is the availability of long-lasting insecticide-treated nets (LLITNs) and artemisinin combination therapy (ACT)-based treatments, in addition to the increased willingness to deploy indoor insecticide spraying [1]. However, it is unclear whether even the deployment of these new control tools will directly lead to total success in malaria control because of the threat of emerging insecticide resistance to pyrethroids and the potential for emergence of artemisinin resistance [1],[9],[10]. Also, parallel efforts will be required to strengthen Africa's weakened health systems [11],[12], which today suffer from widespread malaria misdiagnoses in endemic areas [13] and a lack of access to essential medicines and LLITNs [14]. Accordingly, WHO and other organizations are embarking on renewed efforts to strengthen health systems in Africa and elsewhere [15], while product development partnerships have evolved in a concerted push to accelerate the development of additional new malaria drugs and insecticides, and safe and effective anti-malaria vaccines [1],[5],[16].

There is yet another promising, low-cost and highly cost-effective, and complementary approach for potentially reducing the morbidity of malaria in sub-Saharan Africa, which builds on existing efforts and could be implemented for as little as US$0.50 per person per year or less than 10% add-on to projected malaria control costs [17]–[20]. In sub-Saharan Africa, where more than 90% of malaria deaths occur, children and pregnant women are simultaneously infected with both malaria and a group of other parasitic diseases, known as the neglected tropical diseases (NTDs). The major NTDs in sub-Saharan Africa include hookworm infection (198 million cases) and other soil-transmitted helminth infections such as ascariasis and trichuriasis (173 million and 162 million cases, respectively), schistosomiasis (166 million), trachoma (33 million), lymphatic filariasis (46 million), and onchocerciasis (18–37 million) [17],[18]. There is evidence that some of these NTDs exert an adverse influence on the clinical outcome of malaria in childhood and in pregnancy [21]–[24], and even possibly on malaria transmission [25]. Shown in Figure 1 is a previously published map demonstrating the geographic overlap and co-endemicity of falciparum malaria and hookworm infection (Africa's most common NTD), based on statistical and spatial analyses [22]. This analysis shows high spatial congruence of these two infections, with one quarter of all sub-Saharan African schoolchildren simultaneously at risk for hookworm and malaria. Almost all of the estimated 50 million schoolchildren in sub-Saharan Africa with hookworm infection are also at high risk for malaria, except in a small band of the Sahel where the climate is presumably too dry to support the larval development of hookworms [22]. A similar association has also been noted between malaria and schistosomiasis [21]. 
Therefore, early evidence points to high rates of malaria and NTD coinfections in sub-Saharan Africa, especially with hookworm infection and schistosomiasis.

Figure 1. Distribution of Hookworm and Malaria Coinfection.

Geographic overlap of moderate-high hookworm infection prevalence (greater than 20% prevalence of infection among school-aged children) and falciparum malaria transmission (based on a map of climatic suitability for Plasmodium falciparum malaria transmission, adjusted for urbanization). Modified from [22].

doi:10.1371/journal.pntd.0000270.g001

In Africa and other tropical developing countries, the great killer and disabler that results from malaria and NTD coinfections is anemia [23], [26]–[28]. Anemia accounts for up to one half of malaria deaths in young children [26], and is a leading contributor to the huge numbers of maternal deaths that result during pregnancy, as well as premature births [27]. Chronic anemia in young children is also associated with reductions in physical growth, and impaired cognition and school performance [23],[24]. Many of the NTDs, but especially two of the most common ones in sub-Saharan Africa, hookworm infection and schistosomiasis, cause anemia [24], [28]–[32], while in Asia (and presumably elsewhere), hookworm infection, schistosomiasis, and trichuriasis result in a synergistic anemia [33]. Malaria also causes severe anemia [26],[27], and in cases of malaria and NTD coinfection, anemia in vulnerable children and women develops through one or more of several mechanisms including blood loss, hemolysis, anemia of inflammation, and splenic sequestration [22]–[24], [28]–[32]. An important consequence of malaria and NTD coinfections is an enhancement in anemia, or what we have called previously “the perfect storm of anemia” [24]. For instance, in Kenya, hemoglobin concentrations were found to be 4.2 g/l lower among children harboring hookworm and malaria coinfections than in children with single-species infections [23]. Because hookworm and schistosomiasis are widespread in Africa [17],[21],[22], it is likely that these NTDs represent important contributors to the overall mortality from childhood malaria in this region [23],[24],[28].

Similarly, most of the 7.5 million pregnant women infected with hookworm likely live in areas of sub-Saharan Africa that place them at risk for malaria [27],[31]. At the same time, malaria control and NTD control have each been shown to reduce anemia both in children [23],[26],[34],[35] and in pregnant women [30],[31],[36],[37]. Therefore, combining malaria and NTD control practices in a unified anemia framework affords one of the best opportunities to reduce the huge burden of morbidity and mortality that results from anemia in sub-Saharan Africa. In addition to the health improvement that would result from anemia reduction, there is also some evidence that hookworm and schistosomiasis (and possibly other NTDs) may immunomodulate their human host and promote increased susceptibility to malaria, so that NTD control would work in synergy with nets and other measures to reduce malaria incidence [25].

In sub-Saharan Africa, there are several opportunities to link malaria and NTD control programs [23]. They include programs targeted for infants, preschool children, or school-aged children that employ intermittent preventive treatment (IPT), in which use of either sulfadoxine–pyrimethamine or ACT [38] would be supplemented with anthelminthic drugs (“deworming”) or with a rapid-impact package of NTD drugs that simultaneously target hookworm and other soil-transmitted helminth infections, lymphatic filariasis, onchocerciasis, and trachoma [17],[23],[24]. A joint program of malaria and NTD control could be incorporated as a new element of Integrated Management of Childhood Illness and other programs for children [39]. There are also opportunities for linking IPT in pregnancy with anthelminthic drugs (or the rapid-impact package) for NTD control, especially given the benefit of deworming in terms of improving birth outcome and reducing maternal morbidity and mortality [36],[37]. The anthelminthic drugs mebendazole and albendazole can be used in the second and third trimester of pregnancy and are recommended by WHO in the appropriate settings [40]. Community-based prevention efforts could also be integrated. Both untreated bed-nets and LLITNs are proportionately more effective in preventing lymphatic filariasis compared with malaria [41], and the use of bed-nets was shown to increase substantially, in some cases 9-fold, when used alongside NTD control efforts [42].

Together, malaria and the seven most common NTDs listed above cause almost 2 million deaths and are responsible for the loss of almost 100 million disability-adjusted life years (DALYs) annually (almost 20% higher than the disease burden from HIV/AIDS) [23]. Much of this high disease burden operates through the mechanism of anemia. According to J. Crawley, “…an integrated and non-disease specific approach is essential if the intolerable burden of anemia that currently exists in malaria-endemic regions of Africa is going to be reduced” [43]. Although there will be operational challenges to integrating NTD with malaria control, the opportunities for improving health, education, and economic development for the poorest people in sub-Saharan Africa are simply too great for us to ignore. Accordingly, the public–private partnerships of the Global Network for NTDs are working to identify opportunities for integration [18]. In addition, given the ability of hookworm and other NTDs to interfere with vaccine immunogenicity, we also need to consider the importance of NTD research for the malaria research agenda [44], as well as the opportunity to develop new NTD drugs and vaccines alongside new innovations in malaria treatment and prevention [45].

It is likely that focusing control efforts on malaria alone will thwart global efforts to sustain malaria control, much less achieve eradication. Ultimately, by reducing anemia in sub-Saharan Africa, linking the NTDs with malaria control would have a major impact on almost all of the Millennium Development Goals [20]. It is some four years since this approach was suggested [19], but policy makers are only gradually recognizing the benefits of more holistic approaches to tackling the diseases of the poor. An integrated control program for tropical anemia in Africa represents one of our better hopes for a quick win in the fight for sustainable disease control and poverty reduction.

References

1. Greenwood BM, Fidock DA, Kyle DE, Kappe SH, Alonso PL, et al. (2008) Malaria: Progress, perils, and prospects for eradication. J Clin Invest 118: 1266–1276. doi:10.1172/JCI33996.

2. Kihara M, Carter JA, Newton CR (2006) The effect of Plasmodium falciparum on cognition: A systematic review. Trop Med Int Health 11: 386–397. doi:10.1111/j.1365-3156.2006.01579.x.

3. Rogerson SJ, Mwapasa V, Meshnick SR (2007) Malaria in pregnancy: Linking immunity and pathogenesis to prevention. Am J Trop Med Hyg 77: (Suppl 6)14–22.

4. Sachs JD (2005) Achieving the millennium development goals—The case of malaria. N Engl J Med 352: 115–117. doi:10.1056/NEJMp048319.

5. Bill & Melinda Gates Foundation (2007) Bill and Melinda Gates call for new global commitment to chart a course for malaria eradication. Available: http://www.gatesfoundation.org/GlobalHealth/Pri_Diseases/Malaria/Announcements/Announce-071007.htm. Accessed 2 July 2008.

6. Roll Back Malaria Partnership (2008) What is the Roll Back Malaria (RBM) Partnership? Available: http://rbm.who.int/aboutus.html. Accessed 2 July 2008.

7. Malaria No More (2008) About Malaria No More. Available: http://www.malarianomore.org/about.php. Accessed 2 July 2008.

8. Greenwood B (2004) The Garki project (box 8-1). In: Arrow KJ, Panosian CB, Gelband H, editors. Saving lives, buying time: Economics of malaria drugs in an age of resistance. The National Academies Press. Available: http://www.nap.edu/catalog.php?record_id=11017. Accessed 2 July 2008.

9. Casimiro SL, Hemingway J, Sharp BL, Coleman M (2007) Monitoring the operational impact of insecticide usage for malaria control on Anopheles funestus from Mozambique. Malar J 6: 142. doi:10.1186/1475-2875-6-142.

10. Noedl H (2005) Artemisinin resistance: How can we find it? Trends Parasitol 21: 404–405. doi:10.1016/j.pt.2005.06.012.

11. Breman JG, Alilio MS, Mills A (2004) Conquering the intolerable burden of malaria: What's new, what's needed: A summary. Am J Trop Med Hyg 71: (Suppl 2)1–15.

12. Webster J, Lines J, Bruce J, Armstrong Schellenberg JR, Hanson K (2005) Which delivery systems reach the poor? A review of equity of coverage of ever-treated nets, never-treated nets, and immunisation to reduce child mortality in Africa. Lancet Infect Dis 5: 709–717. doi:10.1016/S1473-3099(05)70269-3.

13. Amexo M, Tolhurst R, Barnish G, Bates I (2004) Malaria misdiagnosis: Effects on the poor and vulnerable. Lancet 364: 1896–1898. doi:10.1016/S0140-6736(04)17446-1.

14. Lufesi NN, Andrew M, Aursnes I (2007) Deficient supplies of drugs for life threatening diseases in an African community. BMC Health Serv Res 7: 86. doi:10.1186/1472-6963-7-86.

15. World Health Organization (2007) Everybody's business: Strengthening health systems to improve health outcomes. WHO's framework for action. Available: http://www.who.int/healthsystems/strategy/everybodys_business.pdf. Accessed 3 July 2008.

16. Hemingway J, Beaty BJ, Rowland M, Scott TW, Sharp BL (2006) The innovative vector control consortium: Improved control of mosquito-borne diseases. Trends Parasitol 22: 308–312. doi:10.1016/j.pt.2006.05.003.

17. Molyneux DH, Hotez PJ, Fenwick A (2005) “Rapid-impact interventions”: How a policy of integrated control for Africa's neglected tropical diseases could benefit the poor. PLoS Med 2: e336. doi:10.1371/journal.pmed.0020336.

18. Hotez PJ, Molyneux DH, Fenwick A, Kumaresan J, Ehrlich Sachs S, et al. (2007) Control of neglected tropical diseases. N Engl J Med 357: 1018–1027.

19. Molyneux DH, Nantulya VM (2004) Linking disease control programmes in rural Africa: A pro-poor strategy to reach Abuja targets and millennium development goals. BMJ 328: 1129–1132. doi:10.1136/bmj.328.7448.1129.

20. Sachs JD, Hotez PJ (2006) Fighting tropical diseases. Science 311: 1521. doi:10.1126/science.1126851.

21. Sokhna C, Le Hesran JY, Mbaye PA, Akiana J, Camara P, et al. (2004) Increase of malaria attacks among children presenting concomitant infection by Schistosoma mansoni in Senegal. Malar J 3: 43. doi:10.1186/1475-2875-3-43.

22. Brooker S, Clements AC, Hotez PJ, Hay SI, Tatem AJ, et al. (2006) The co-distribution of Plasmodium falciparum and hookworm among African schoolchildren. Malar J 5: 99. doi:10.1186/1475-2875-5-99.

23. Brooker S, Akhwale W, Pullan R, Estambale B, Clarke SE, et al. (2007) Epidemiology of Plasmodium-helminth co-infection in Africa: Populations at risk, potential impact on anemia, and prospects for combining control. Am J Trop Med Hyg 77: (Suppl 6)88–98.

24. Hotez PJ, Molyneux DH, Fenwick A, Ottesen E, Ehrlich Sachs S, et al. (2006) Incorporating a rapid-impact package for neglected tropical diseases with programs for HIV/AIDS, tuberculosis, and malaria. PLoS Med 3: e102. doi:10.1371/journal.pmed.0030102.

25. Druilhe P, Tall A, Sokhna C (2005) Worms can worsen malaria: Towards a new means to roll back malaria? Trends Parasitol 21: 359–362. doi:10.1016/j.pt.2005.06.011.

26. Korenromp EL, Armstrong-Schellenberg JR, Williams BG, Nahlen BL, Snow RW (2004) Impact of malaria control on childhood anaemia in Africa—A quantitative review. Trop Med Int Health 9: 1050–1065. doi:10.1111/j.1365-3156.2004.01317.x. Find this article online

27. Guyatt HL, Snow RW (2004) Impact of malaria during pregnancy on low birth weight in sub-Saharan Africa. Clin Microbiol Rev 17: 760–769. doi:10.1128/CMR.17.4.760-769.2004. Find this article online

28. Bates I, McKew S, Sarkinfada F (2007) Anaemia: A useful indicator of neglected disease burden and control. PLoS Med 4: e231. doi:10.1371/journal.pmed.0040231. Find this article online

29. Friedman JF, Kanzaria HK, McGarvey ST (2005) Human schistosomiasis and anemia: The relationship and potential mechanisms. Trends Parasitol 21: 386–392. doi:10.1016/j.pt.2005.06.006. Find this article online

30. Hotez PJ, Brooker S, Bethony JM, Bottazzi ME, Loukas A, et al. (2004) Hookworm infection. N Engl J Med 351: 799–807. doi:10.1056/NEJMra032492. Find this article online

31. Crompton DW (2000) The public health importance of hookworm disease. Parasitology 121: (Suppl)S39–S50. Find this article online

32. Stoltzfus RJ, Dreyfuss ML, Chwaya HM, Albonico M (1997) Hookworm control as a strategy to prevent iron deficiency. Nutr Rev 55: 223–232. Find this article online

33. Ezeamama AE, McGarvey ST, Acosta LP, Zierler S, Manalo DL, et al. (2008) The synergistic effect of concomitant schistosomiasis, hookworm, and trichuris infections on children's anemia burden. PLoS Negl Trop Dis 2: e245. doi:10.1371/journal.pntd.0000245. Find this article online

34. Mebrahtu T, Stoltzfus RJ, Chwaya HM, Jape JK, Savioli L, et al. (2004) Low-dose daily iron supplementation for 12 months does not increase the prevalence of malarial infection or density of parasites in young Zanzibari children. J Nutr 134: 3037–3041. Find this article online

35. ter Kuile FO, Terlouw DJ, Phillips-Howard PA, Hawley WA, Friedman JF, et al. (2003) Impact of permethrin-treated bed nets on malaria and all-cause morbidity in young children in an area of intense perennial malaria transmission in western Kenya: Cross-sectional survey. Am J Trop Med Hyg 68: (Suppl 4)100–107. Find this article online

36. Larocque R, Casapia M, Gotuzzo E, Gyorkos TW (2005) Relationship between intensity of soil-transmitted helminth infections and anemia during pregnancy. Am J Trop Med Hyg 73: 783–789. Find this article online

37. Christian P, Khatry SK, West KP Jr (2004) Antenatal anthelmintic treatment, birthweight, and infant survival in rural Nepal. Lancet 364: 981–983. doi:10.1016/S0140-6736(04)17023-2. Find this article online

38. Greenwood B (2006) Review: Intermittent preventive treatment—A new approach to the prevention of malaria in children in areas with seasonal malaria transmission. Trop Med Int Health 11: 983–991. doi:10.1111/j.1365-3156.2006.01657.x. Find this article online

39. Garg R, Lee LA, Beach MJ, Wamae CN, Ramakrishnan U, et al. (2002) Evaluation of the integrated management of childhood illness guidelines for treatment of intestinal helminth infections among sick children aged 2–4 years in western Kenya. Trans R Soc Trop Med Hyg 96: 543–548. Find this article online

40. Albonico M, Montresor A, Crompton DW, Savioli L (2006) Intervention for the control of soil-transmitted helminthiasis in the community. Adv Parasitol 61: 311–348. doi:10.1016/S0065-308X(05)61008-1. Find this article online

41. Manga L (2002) Vector-control synergies, between ‘roll back malaria’ and the global programme to eliminate lymphatic filariasis, in the African region. Ann Trop Med Parasitol 96: (Suppl 2)S129–S132. Find this article online

42. Blackburn BG, Eigege A, Gotau H, Gerlong G, Miri E, et al. (2006) Successful integration of insecticide-treated bed net distribution with mass drug administration in central Nigeria. Am J Trop Med Hyg 75: 650–655. Find this article online

43. Crawley J (2004) Reducing the burden of anemia in infants and young children in malaria-endemic countries of Africa: From evidence to action. Am J Trop Med Hyg 71: (Suppl 2)25–34. Find this article online

44. Druilhe P, Sauzet JP, Sylla K, Roussilhon C (2006) Worms can alter T cell responses and induce regulatory T cells to experimental malaria vaccines. Vaccine 24: 4902–4904. doi:10.1016/j.vaccine.2006.03.018. Find this article online

45. Hotez PJ, Ferris MT (2006) The antipoverty vaccines. Vaccine 24: 5787–5799. Find this article online

Copyright: © 2008 Hotez, Molyneux. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Funding: The authors received no specific funding for this study.

Competing interests: PJH is President of the Sabin Vaccine Institute. He is an inventor on two international patents on hookworm vaccines. PJH and DHM are co-founders of the Global Network for Neglected Tropical Disease Control.

* E-mail: mtmpjh@gwumc.edu or photez@gwu.edu (PJH); david.molyneux@liverpool.ac.uk (DHM)

http://www.plosntds.org/article/info%3Adoi%2F10.1371%2Fjournal.pntd.0000270

Curved electronic eye created

Published online 6 August 2008 | Nature | doi:10.1038/news.2008.1004

Flexible circuits should lead to diverse imaging applications.

Katrina Charles

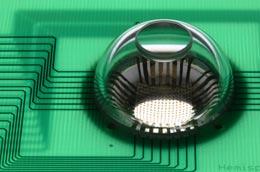

An eye-shaped camera made from a flexible mesh of silicon light-detectors marks a significant step towards creating a 'bionic' eye, its inventors say.

A single lens, mounted on top of a transparent cap, focuses light onto the flexible electronic circuit beneath. Credit: John Rogers/Nature

Conventional cameras use a curved lens to focus an image onto a flat surface where the light is captured either by film or by digital sensors. However, focusing light from a curved lens onto a flat surface distorts the image, necessitating a series of other lenses that reduce the distortion but tend to increase the bulk and cost of a device.

By contrast, human eyes require only a single lens and avoid much of this distortion, because the image is focused onto the curved surface at the back of the eyeball. John Rogers, a materials scientist at the University of Illinois at Urbana-Champaign, and his colleagues have taken inspiration from our own eyes to create an electronic version [1].

It's a problem that many researchers have worked on over the last few decades. The key hurdle has been the rigidity of established electronic materials, which fracture when bent.

Flexible scaffold

The team's solution was to use a series of silicon photodetectors (pixels) connected by thin metal wires. This network is supported and encapsulated by a thin film of polyimide plastic, allowing the flexible scaffold to bend when compressed. This scaffold takes up the mechanical stress and protects the pixels as the array takes its hemispherical shape.

The team made a hollow dome about 2 centimetres wide from a rubber-like material called poly(dimethylsiloxane). They flattened out the stretchy dome and attached the electronic mesh. Then, as the hollow dome snapped back into its original shape, it pulled the array with it, forming a hemisphere that could be attached to a lens: the basis of the camera.

“The ability to wrap high quality silicon devices onto complex surfaces and biological tissues adds very interesting and powerful capabilities to electronic and optoelectronic device design,” says Rogers. "It allows us to put electronics in places where we couldn't before."

The research was described as a “breakthrough” by Dago de Leeuw, a research fellow with Philips Research Laboratories based in Eindhoven, the Netherlands. The technique could work for any application where you would want to have stretchable electronics, and is limited only by what sensors you can add to the array.

Higher resolution

To improve the camera’s resolution, the researchers have also experimented with another of nature's designs. The constant motion of the human eye means that we get many views of an object, which we automatically combine to give us a better picture of what we’re looking at, explains Rogers. So his team has done the same, taking several images with their camera at slightly different angles and then combining them with computer software to give a much sharper image.

Takao Someya of the University of Tokyo, Japan, who also works on stretchable electronics, says that the camera marks a great advance in the field of stretchable electronics, with potential applications including bionic implants, robotic sensory skins and biomedical monitoring devices [2].

At the moment, the camera is limited to 256 pixels, but this could be easily scaled up, says Rogers. Advantageously, this electronic eye camera exploits technology that already exists, so facilities currently fabricating planar silicon devices should be able to adapt to making this new technology.

Katrina Charles is a BA Media Fellow.

*

References

1. Ko, H. C. et al. Nature 454, 748–753 (2008).

2. Someya, T. Nature 454, 703–704 (2008).

http://www.nature.com/news/2008/080806/full/news.2008.1004.html

Oil Market Update

Clive Maund

support@clivemaund.com

August 10, 2008

We have had the heavy correction in oil predicted in the last Oil Market update, which was posted in mid-July, with the pendulum rapidly swinging from extremely overbought to extremely oversold. On the 3-year chart for Light Crude, the correction looks quite normal. Before it set in, oil was wildly overbought, with a huge gap having opened up between its 50 and 200-day moving averages, this gap being a key factor leading us to conclude that a major correction was imminent. The MACD indicator had also been riding at extreme levels for weeks, which was another important factor calling for a reversal. Now this indicator has plunged to its lowest level for years as speculators have rushed for the exits, a level that equals the most extreme reading attained during the uptrend, showing that selling has been way overdone - and although the price could retreat a little further short-term, we are now probably very close to a reversal. On the chart we can see that the price is now approaching the strong support at the lower boundary of the uptrend channel in force from early 2007 and also support in the vicinity of its 200-day moving average. These important supporting factors coupled with the deeply oversold condition should lead to a reversal to the upside soon.

On the 6-month chart for Light Crude we can see that while the price pattern has certainly not signalled that the downtrend is over yet, there are factors in play suggesting that it does not have much further to run, and should end soon, if not immediately. There are 2 things in particular that are worthy of note. One is that the downtrend appears to be taking the form of a bullish Falling Wedge. It is too early to be sure at this point, but if the tentative lower channel line drawn on the chart is correct it is showing a gradual diminution of selling pressure over time, which would seem to be the case, for as we can see the MACD histogram is creeping back towards the zero line, despite the continuing losses. It is thus reasonable to conclude, given that oil stocks, which appear to have bottomed, tend to do so ahead of oil itself, that although oil may drop a little more it is close to bottoming and is likely to do so in the $110 area.

Despite the fact that they have been and are making mountains of money, oil stocks have dropped substantially over the past several months. This is because the market is always discounting future (foreseeable) developments 6 to 9 months down the road, and, unsettled by the heavy declines in the broad stockmarket earlier in the year, investors have been looking ahead to somewhat lower profits resulting from recession and lower oil prices. The fact remains however that oil companies have been making gargantuan windfall profits out of the recent boom in oil prices, and have built up massive "war chests", which will scarcely be dented by backhanders to their pals in the Republican Party. Bearing this in mind, it should be clear that with oil having now corrected back heavily as oil stocks had anticipated, the stage is set for a new uptrend to develop. On the 3-year chart we can see how the OIX index has dropped back across its broad long-term uptrend channel to its lower boundary where it is logical and reasonable to expect it to find support and turn up again. That it is finding support towards this uptrend line is evident from the fact that the rate of decline is now slowing. The overbought extreme attained in May has been followed by an even more oversold extreme just a couple of weeks ago, as shown by the MACD indicator at the bottom of the chart.

There are many interesting features to observe on the 6-month chart for the OIX oil index. After a minor reaction late in February and the first part of March, a strong "bull hammer" marked the reversal that led to the following powerful uptrend, which terminated with a fine example of a bearish "shooting star" candlestick in May. The index then formed an intermediate top above its 50-day moving average before breaking down below a clear line of support (now resistance) at the 920 level leading to the recent plunge. It became critically oversold by late last month, as shown by the extremely low MACD reading, leading to a slowing in the rate of decline. Now it appears to be marking out an intermediate base area with downside momentum clearly having slowed considerably. A bullish Falling Wedge pattern is now evident on the chart, although it is too early to be sure of this, but if this is what it is we can expect a strong reversal to the upside soon, and recent candlestick action is decidedly bullish, with several long lower shadows demonstrating the underlying demand snapping at oil stocks when they dip - doubtless only too happy to buy from the clowns who are bailing out, probably with heavy losses. On Friday a bullish hammer candlestick appeared on the chart, the low of which may be very close to the bottom for this cycle.

Oil stocks are believed to have bottomed, or to be very close to bottoming, and to be presaging an imminent intermediate low in oil, likely in the $110 area.

On Friday on www.clivemaund.com we liquidated our Proshares Ultrashort Oil & Gas ETF units (code DUG), having bought them a week before oil topped out.

Clive Maund

support@clivemaund.com

August 09, 2008

Clive Maund is an English technical analyst who holds a diploma from the Society of Technical Analysts, Cambridge, and lives in the Lake District, Chile.

Visit his subscription website at clivemaund.com.

Clivemaund.com is dedicated to serious investors and traders in the precious metals and energy sectors. I offer my no nonsense, premium analysis to subscribers. Our project is 100% subscriber supported. We take no advertising or incentives from the companies we cover. If you are serious about making some real profits, this site is for you! Happy trading.

No responsibility can be accepted for losses that may result as a consequence of trading on the basis of this analysis.

Copyright © 2003-2008 CliveMaund. All Rights Reserved.

Carbon Dioxide: Good for Something?

Jessica Marshall, Discovery News

April 10, 2008 -- The carbon in oil and coal is used to make many useful things: fuel, plastics, paints, detergents, pharmaceuticals...the list is long. Unfortunately, most of that carbon -- especially from fuel -- ends up in the atmosphere as good-for-nothing, climate-change-inducing carbon dioxide.

But is it really good for nothing? Maybe not for long. Chemists are developing strategies to put CO2 to use making products normally derived from oil. These approaches could take a bite out of power plant CO2 emissions that would normally go into the atmosphere.

For instance, CO2 could take the place of the poisonous gas phosgene in production of certain plastics, according to findings released this week at a meeting of the American Chemical Society by Toshiyasu Sakakura of the National Institute of Advanced Industrial Science and Technology in Tsukuba, Japan.

Sakakura and colleagues developed a new catalyst that efficiently converts CO2 and methanol into a plastic precursor whose synthesis currently requires phosgene. Phosgene, which is derived from petroleum, is particularly nasty. It was used as a chemical warfare agent in World War I.

"It does not produce much waste," Sakakura said of the new process. "The waste is just water, so it is simple and clean."

Bringing New Meaning to the Word "Recycled"

Sakakura and other researchers have targeted other processes for making plastics, which are, essentially, long chains of carbon. CO2 can react with a class of chemicals called epoxides to make polycarbonate, the tough, clear plastic used in compact discs, eyeglass lenses, bulletproof windows and more.

Using CO2 in such processes is a challenge, said Thomas Müller of the CAT Catalytic Center in Aachen, Germany, who also presented at the meeting, because it is relatively inert and "low in energy." After all, carbon dioxide is what is left after the energy stored in the chemical bonds of the molecules that make up fuel has been released by combustion.

This means that reactions using CO2 require something else, like methane or an epoxide, to act as a source of energy in creating a stable, higher-energy product like plastics.

"It would be great if you could polymerize CO2 directly," said chemist Geoffrey Coates of Cornell University in Ithaca, NY, referring to the process of linking carbon atoms together. "But you would defeat the laws of nature."

These co-reactants generally come from fossil fuel sources, so these CO2-based processes generally decrease, but do not eliminate, the need for petroleum. Coates has made polymers, for instance, that are 30 to 50 percent CO2.

Down With Oil

To eliminate the petroleum source altogether, he used an epoxide derived from the oil in orange peels as a co-reactant to make plastic with CO2. Coates is working to commercialize CO2-based plastics with a range of properties, and other "environmentally benign" polymers, through a company called Novamer.

Even if fossil fuel contributions were eliminated from all of these reactions, experts agree that making plastics from CO2 generated in power plants or other industrial processes will not fix the climate change problem.

"When we keep burning as much fossil fuel as we are, it's going to be impossible for one chemical use to negate all of the CO2 that's made on a daily basis," Coates said. Production of all polymers worldwide amounted to about 260 million tons in 2005, according to Müller, while CO2 emissions added up to more than 100 times more.

"However, if you're using CO2 instead of a petrochemical source, then you are prolonging the lifetime of the petrochemical resources that we have," said Christopher Rayner, of the University of Leeds in the U.K., who is working to make formic acid, which can be used in fuel cells, from CO2 and hydrogen.

Doing As the Plants Do

Another approach to providing the energy needed to turn the carbon in CO2 into a more useful form is with electrochemical cells -- which use electricity and a catalyst to convert CO2 into carbon monoxide and, eventually, into a fuel such as methanol. Daniel Dubois of the Pacific Northwest National Laboratory, another meeting presenter, is tackling this problem.

"Part of the problem is, where do you get your electricity?" Rayner said of this method. Unless it comes from a renewable source, the electricity supply creates CO2 emissions that undo the gains in absorbing CO2 in the electrochemical process.

There is, of course, a precedent for stripping CO2 out of the atmosphere on a large scale and converting it into all sorts of useful molecules: Plants do it all day by harnessing the sun's energy through photosynthesis and using it to build their cells and tissues.

Some researchers are trying to turn CO2 back into fuels using methods similar in principle to photosynthesis -- using the energy in light to transform carbon dioxide into higher-energy molecules.

Others are trying to capitalize directly on plants' ability to convert CO2 into potentially valuable molecules, including sources of fuel. It will be a challenge to identify which compounds can economically be made this way, Müller pointed out, also noting that only one percent of the sun's energy is converted by photosynthesis into plant tissue. He hopes synthetic approaches can be more efficient.

"Of course we can learn from nature," he added, "We'd like to copy them."

http://dsc.discovery.com/news/2008/04/10/carbon-dioxide-plastic-print.html

North Pole webcams

http://psc.apl.washington.edu/northpole/index.html

Johnny Hollow - Superhero - An Iron Man Music Video

Madonna - Give It 2 Me

O.A.R. - Shattered

Divine Brown - Lay It On The Line

Tokyo Police Club - Tessellate

lol

Ida Maria - I Like You So Much Better When You're Naked

Jakalope - Pretty Life

Nightwish - The Phantom Of The Opera

Nightwish - Over the Hills and Far Away (End of An Era)

Kings of Leon - Sex on Fire

Kings of Leon - Fans (Live) Glastonbury 2008

Hedley - Old School

A Fine Frenzy - Almost Lover

World Energy and Population

Trends 2007 to 2100

by Paul Chefurka

Original text: http://www.paulchefurka.ca/WEAP/WEAP.html

October 2007

Remarks: The causal link between energy and population in this paper is essentially intuitive. While it seems reasonable, "proving" that causality is difficult. However, if the linkage is valid, the consequences for humanity of any overall decline in energy supplies are too dire to be ignored.

For conclusions on medium term global energy supplies you can consult the later article "World Energy to 2050". http://www.paulchefurka.ca/WEAP2/WEAP2.html

Abstract

Throughout history, the expansion of human population has been supported by a steady growth in our use of energy. Our present industrial civilization wholly depends on access to a very large amount of energy of various types. If the availability of this energy were to decline significantly it could have serious repercussions for civilization and the human population it supports. This paper shows production models for the various energy sources we use and projections of their likely evolution out to the year 2100. The full picture is then translated into a population model based on an estimate of changing average per-capita energy consumption over the century. Finally, the impact of ecological damage is added to the model to arrive at a final population estimate.

This model, known as the "World Energy and Population" model, or WEAP, suggests that the world's population will decline significantly over the course of the century.

Introduction

During the global industrialization, the level of human population has been closely related to the amount of energy we have used. Over the last forty years, the per capita energy consumption has averaged about 1.5 tonnes of oil equivalent (toe) per person per year, rising from a global average of 1.2 toe per person in 1966 to 1.7 toe per person in 2006. As the global energy supply tripled over that time, the population has doubled.

Figure 1 shows the close relationship between global energy consumption, world GDP and global population and implies that an overall increase in the energy supply has supported the increase in population.

Figure 1: World Energy, GDP and Population, 1965 to 2003

Methodology

The analysis in this paper is supported by a model of trends in energy production. The model is based on historical data of actual energy production, connected to projections that are drawn from the thinking of various expert energy analysts as well as my own interpretation of future directions.

The current global energy mix consists of oil (36%), natural gas (24%), coal (28%), nuclear (6%), hydro (6%) and renewable energy such as wind and solar (about 1%). Historical production in each category (except for renewable energy) has been taken from the BP Statistical Review of World Energy 2007. For comparisons between categories I use a standard measure called the tonne of oil equivalent (toe). While this approach doesn't take into account the varying efficiencies of different sources like oil and hydroelectricity, it does provide a well accepted standard for general comparison.

We will first examine each of the energy categories separately. I will define as clearly as possible the factors and parameters I have considered in building its scenario. This will allow you to decide for yourself whether my assumptions seem plausible. We will then combine them into a single global energy projection.

Once the energy picture has been established, we will explore its possible effects on the world population. Then we will incorporate the probable effects of ongoing ecological damage to arrive at a final projection of human numbers over the next century.

Notes

The WEAP model was developed as a simple Excel spreadsheet. The timing of energy-related events and rates of increase or decrease of supply were chosen through careful study of the available literature. In some cases different authors had diverging opinions on these matters. In these cases I have relied on my own analysis and judgment. Models always reflect the opinions of their authors, and it is best to be clear about that from the start. Nevertheless, I have made deliberate efforts throughout to be objective in my choices, to base my projections on observed trends in the present and recent past, and to refrain from wishful thinking at all times.

The WEAP model presents a global expectation of the effects of energy and ecological factors on world population. It does not directly incorporate influences of regional or national differences. Its purpose is to establish a broad conceptual framework within which such regional disparities may be understood.

The analysis is intended solely to clarify a "most likely" future scenario, based purely on the situation as it now exists and will probably unfold. You will not find any suggestions for what we ought to do, or any proposals based on the assumption that we can radically alter the behaviour of people or institutions over the short term. The same goes for new technologies. You will not find any discussion of fusion or hydrogen power, for example.

The Excel spreadsheet containing the data used to assemble the WEAP model is available here.

Energy Component Models

Oil

The oil supply is finite, non-renewable, and subject to effects which will result in a declining production rate in the near future. This situation is popularly known as Peak Oil. The key concept of Peak Oil is that after we have extracted about half the total amount of oil in place the rate of extraction will reach a peak and then begin an irreversible decline.

This happens both for individual oil fields and for larger regions like countries, but for different reasons. In individual oil fields this phenomenon is caused by geological factors inherent to the structure of the oil reservoir. At the national or global level it is caused by logistical factors. When we start producing oil from a region, we usually find and develop the biggest, most accessible oil fields first. As they go into decline and we try to replace the lost production, the available new fields tend to be smaller with lower production rates that don't compensate for the decline of the large fields they are replacing.

Oil fields follow a size distribution consisting of a very few large fields and a great many smaller ones. This distribution is illustrated by the fact that 60% of the world's oil supply is extracted from only 1% of the world's active oil fields. As one of these very large fields plays out it can require the development of hundreds of small fields to replace its production.

The theory behind Peak Oil is widely available on the Internet, and some introductory references are given here, here and here.

Timing

There is much debate over when we should expect global oil production to peak and what the subsequent rate of decline might be. While the rate of decline is still hotly contested, the timing of the peak has become less controversial. Recently a number of very well informed people have declared that the peak has arrived. This brave band includes such people as billionaire investor T. Boone Pickens, energy investment banker Matthew Simmons (author of the book "Twilight in the Desert" that deconstructs the state of the Saudi Arabian oil reserves), retired geologist Ken Deffeyes (a colleague of Peak Oil legend M. King Hubbert) and Dr. Samsam Bakhtiari (a former senior scientist with the National Iranian Oil Company).

My position is in agreement with the luminaries mentioned above, that the peak is happening as I write this (in late 2007). It has been confirmed by the pattern of oil production and oil prices over the last three years. I discovered in the process that crude oil production peaked in May 2005 and has shown no growth since then despite a doubling in price and a dramatic surge in exploration activity.

Decline Rate

The post-peak decline rate is another question. The best guides we have are the performances of oil fields and countries that are known to be already in decline. Unfortunately, those decline rates vary all over the map. The United States, for instance, has been in decline since 1971 and has lost two thirds of its capacity since then, for a decline rate of about 3% per year. On the other hand, the North Sea basin is showing an annual decline around 10%, and the giant Cantarell field in Mexico is losing production at rates approaching 20% per year.
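The 3% figure for the United States can be checked with a short calculation. The sketch below assumes that the decline compounds at a constant annual rate and that "since 1971" means roughly 36 years, up to 2007; the function name is illustrative and is not taken from the WEAP spreadsheet.

```python
def implied_annual_decline(fraction_remaining: float, years: int) -> float:
    """Constant yearly decline rate that leaves `fraction_remaining`
    of the original capacity after `years` years of compounding."""
    return 1.0 - fraction_remaining ** (1.0 / years)


# Assumption: two thirds of capacity lost between 1971 and 2007,
# so one third remains after 36 years.
us_rate = implied_annual_decline(1.0 / 3.0, 2007 - 1971)
print(f"Implied US decline rate: {us_rate:.1%} per year")  # prints 3.0%
```

The same function can be run in reverse as a sanity check: a field declining at a steady 10% or 20% per year loses capacity far faster than this, which is why the choice of decline rate dominates any long-range projection.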

In order to create a realistic decline model for the world's oil, I have chosen to follow the approach of Dr. Bakhtiari in his WOCAP model. He assumes a gradually increasing decline rate over time, starting off very gently and ramping up as the years go by. WOCAP has proven to be fairly accurate so far, and I have adopted a variant of it. The main difference is that my model is a little less aggressive. Where WOCAP predicts that production will fall from 4000 million tonnes of oil per year (Mtoe/yr) now to 2750 Mtoe/yr in 2020, my model doesn't reach that point until 2030. The WEAP model increases from a decline rate of 1% per year in 2015 to a constant rate of 5% per year after 2040. Even such a relatively conservative decline model gives astonishing results over the course of the century, as shown in Figure 2.
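A ramped decline of this kind can be sketched in a few lines. The code below is only an illustration under stated assumptions: the text gives the end points (1% per year in 2015, a constant 5% per year after 2040) but not the shape of the ramp, so a straight-line interpolation is assumed here, and production is assumed to stay flat at 4000 Mtoe/yr until 2015. The function names are hypothetical, and the output is not expected to reproduce the figures in the WEAP spreadsheet.

```python
def decline_rate(year: float, start: int = 2015, end: int = 2040,
                 r_start: float = 0.01, r_end: float = 0.05) -> float:
    """Annual decline rate: zero before `start`, r_end from `end` onward,
    and (assumption) linearly interpolated in between."""
    if year < start:
        return 0.0
    if year >= end:
        return r_end
    return r_start + (r_end - r_start) * (year - start) / (end - start)


def project_production(p0: float = 4000.0, first: int = 2007,
                       last: int = 2100) -> dict:
    """Year-by-year production in Mtoe/yr, applying each year's rate
    to the previous year's output."""
    series = {first: p0}
    production = p0
    for year in range(first + 1, last + 1):
        production *= 1.0 - decline_rate(year)
        series[year] = production
    return series


series = project_production()
for year in (2007, 2020, 2030, 2040, 2050, 2100):
    print(year, round(series[year]))
```

Changing the ramp shape or the start year shifts the intermediate values noticeably, but the long-run result is dominated by the constant 5% rate after 2040.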

Figure 2: Global Oil Production, 1965 to 2100

The Net Export Problem

The graph in Figure 2 shows the aggregate oil production for the world. However, the world is not a uniform place of oil production and consumption. Some countries are net exporters of oil, while some are net importers who buy the exporters' oil on the international market.

In most countries the demand for oil is constantly increasing. In oil exporting nations rising oil prices have stimulated economic growth. This has resulted in a higher domestic demand for oil. While the nation's oil production is increasing, this does not pose much of a problem. When the exporting nation's production peaks and begins to decline, however, something ominous happens: the amount of oil available for export declines at a faster rate than the production decline. This has become known as the "net oil export problem".

Consider this example. Say an exporting country produces one million barrels per day, and its citizens consume 500,000 barrels per day. This leaves 500,000 barrels for export. Then production declines by 5% per year. After one year their production is 950,000 barrels per day. At the same time, their economy is booming, resulting in an increased demand of 5%. This leads to a consumption of 525,000 barrels per day. That leaves only 425,000 barrels for export, for a 15% decline in exports. A graph over a number of years demonstrates the consequences:

Figure 3: Net Export Example

At the end of 8 years, although the country is still producing over 700,000 barrels per day, its exports have dropped to zero. This pattern has already been seen in Indonesia, the UK and the USA, each of which was once a major oil exporter but is now a net importer.
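The arithmetic of the example can be checked with a few lines of code. This is a minimal sketch that assumes both rates compound annually (5% of the previous year's value each year). The function name and the ten-year horizon are illustrative choices, not part of the original example.

```python
def net_exports(production, consumption, decline=0.05, growth=0.05, years=10):
    """Return a list of (year, production, consumption, exports) tuples.

    Production shrinks and consumption grows by fixed annual
    percentages, compounded once per year (an assumption)."""
    rows = []
    for year in range(years + 1):
        rows.append((year, production, consumption, production - consumption))
        production *= 1.0 - decline
        consumption *= 1.0 + growth
    return rows


rows = net_exports(1_000_000, 500_000)
for year, prod, cons, exports in rows:
    print(f"year {year}: production {prod:,.0f}  "
          f"consumption {cons:,.0f}  exports {exports:,.0f}")

# First year in which nothing is left over for export.
first_zero = next(year for year, _, _, exports in rows if exports <= 0)
print("exports reach zero in year", first_zero)
```

After one year the sketch gives the 950,000, 525,000 and 425,000 barrels per day quoted above. Under these compounding assumptions, exports cross zero during the seventh year, when production is still close to 700,000 barrels per day. The exact year depends on how the rates are compounded and how the years are counted.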

This effect is already visible on the world oil market. Figure 4 shows a graph of total world exports over the last 5 years. An overlaid trend line (a second order polynomial for those who are interested) shows the pattern: an imminent, rapid drop in the world's net oil exports.

Figure 4: World Net Oil Exports 2002 to 2013

Such changes in exports are very worrisome for importing nations. The USA, for instance, imports about two thirds of its oil requirements. If the oil export market should suddenly begin to dry up as Figure 4 suggests it could, the US would be forced to make some very hard choices. These could include accepting a drastic reduction in industrial activity, GDP and lifestyle; abandoning the international oil market and entering into long-term supply contracts with producing nations; or even taking military action to secure foreign oil supplies (as may have already been attempted in Iraq).

I am indebted to the work of Jeffrey Brown and his Export Land Model for these insights.

Natural Gas

The supply situation with natural gas is very similar to that of oil. This makes sense because oil and gas come from the same biological source and tend to be found in similar geological formations. Gas and oil wells are drilled using very similar equipment. The differences between them have everything to do with the fact that oil is a viscous liquid while natural gas is, well, a gas.

While oil and gas will both exhibit a production peak, the slope of the post-peak decline for gas will be significantly steeper due to its lower viscosity. To help understand why, imagine two identical balloons, one filled with water and the other with air. If you set them down and let go of their necks, the air-filled balloon will empty much faster than the one filled with water. A gas reservoir works much the same way. When it is pierced by the well, the gas flows out under its own pressure. As the reservoir empties, the flow can be kept relatively constant until the gas is gone; then it suddenly stops.

Gas reservoirs show the same size distribution as oil reservoirs. As with oil, we found and drilled the big ones first. The reservoirs that are coming on-line now are getting progressively smaller, requiring a larger number of wells to be drilled to recover the same volume of gas. For example, the number of gas wells drilled in Canada each year quadrupled between 1998 and 2004 (from 4,000 wells in 1998 to 16,000 wells in 2004, a 300% increase), while the annual production stayed constant. All this means that the natural gas supply will exhibit a similar bell-shaped curve to what we saw for oil.

One other difference between oil and gas is the nature of their global export markets. Compared to oil, the gas market is quite small. This is due to the difficulty in transporting a gas as opposed to a liquid. While oil can be simply pumped into tankers and back out again, natural gas must first be liquefied (which takes substantial energy), transported in special insulated tankers at very low temperature, then re-gasified at the destination, which requires yet more energy. As a result most of the world's natural gas is shipped by pipeline. This pretty well limits gas to national and continental markets. That has an important implication: if a continent's gas supply runs low, it is very difficult to supplement it with gas from somewhere else that is still well-supplied.

The peak of world gas production may not occur until 2025, but two things are sure: we will have even less warning than we had for Peak Oil, and the subsequent decline rates may be shockingly high. For the gas model I have chosen as the peak a plateau from 2025 to 2030. This is followed by a rapid increase in decline to 8% per year by 2050, remaining at a constant 8% per year for the following 50 years. This gives the production curve shown in Figure 5.

Figure 5: Global Natural Gas Production, 1965 to 2100
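To get a feel for what a constant 8% annual decline implies, it helps to convert the rate into a halving time. The sketch below assumes a fixed percentage decline compounded once per year. The function name is illustrative, and the 5% figure is included only for comparison with the long-run oil decline rate used earlier.

```python
import math


def halving_time(annual_decline):
    """Years for output to fall by half at a constant annual decline rate.

    Solves (1 - annual_decline) ** n == 0.5 for n."""
    return math.log(0.5) / math.log(1.0 - annual_decline)


for rate in (0.05, 0.08):
    print(f"{rate:.0%} per year: output halves in about "
          f"{halving_time(rate):.1f} years")
```

At 8% per year output halves roughly every 8.3 years, compared with about 13.5 years at 5% per year.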

Coal

Coal is the ugly stepsister of fossil fuels. It has a terrible environmental reputation, going back to its first widespread use in Britain in the 1700s. London's coal-fired "pea-soup" fogs were notorious, and damaged the health of hundreds of thousands of people. Nowadays the concern is less about soot and ash than about the carbon dioxide that results from burning coal. Weight for weight, coal produces more CO2 than either oil or gas. From an energy production standpoint coal has the advantage of very great abundance. Of course this abundance is a huge negative when considered from the perspective of global warming.

Most coal today is used to generate electricity. As economies grow, so does their demand for electricity, and if electricity is used to replace some of the energy lost due to the decline of oil and natural gas, this will put yet more upward pressure on the demand for coal. At the moment China is installing two to three new coal-fired power plants per week, and has plans to continue at this pace for at least the next decade.

Just as we saw with oil and gas, coal will exhibit an energy peak and decline. One factor in this is that we have in the past concentrated on finding and using the highest grade of coal, anthracite. Much of what remains consists of lower grade bituminous and lignite. These grades of coal produce less energy when burned, and require the mining of ever more coal to get the same amount of energy.

The Energy Watch Group has conducted an extensive analysis of coal use over the next century, and I have adopted their "best case" conclusions as a starting point for this model. The model projects a continued rise in the use of coal out to a peak in 2025. As global warming begins to have serious effects there will be mounting pressure to reduce coal use, resulting in a slightly more aggressive decline slope than the one projected by the Energy Watch Group. Due to its abundance and our need to replace some of the energy lost from the depletion of oil and gas, the decline in coal use will not be as dramatic as seen with those fossil fuels. The model has the annual decline in coal use increasing evenly from 0% in 2025 to a steady 5% annual decline in 2100. These assumptions give the curve shown in Figure 6.

Figure 6: Global Coal Production, 1965 to 2100

Of course this use of coal carries with it the threat of increased global warming due to the continued production of CO2. Many hopeful words have been written about the possibility of alleviating this worry by implementing Carbon Capture and Storage. CCS usually involves the capture and compression of CO2 from power plant exhaust, which is then pumped into played-out gas fields for long term storage. This technology is still in the experimental stage, and there is much skepticism surrounding the security of storing such enormous quantities of CO2 in porous rock strata. Such plans play little part in this analysis, although later when we discuss the intersection of ecological degradation with declining energy I will assume that little has been done compared to the scale of global CO2 generation.

Nuclear

The graph in Figure 7 is the result of a data synthesis and a bit of projection. I started with a table of reactor ages from the IAEA (reprinted in a presentation to the Association for the Study of Peak Oil and Gas), the table of historical nuclear power production numbers from the BP Statistical Review of World Energy 200 and a table from the Uranium Information Centre showing the number of reactors that are installed, under construction, planned or proposed worldwide.

The interesting thing about the table of reactor ages is that it shows that the vast majority of them (361 out of 439 or 82% to be precise) are between 17 and 40 years old. The number of reactors at each age varies of course, but the average number of reactors in each year is about 17. The number actually goes over 30 in a couple of years.

Two realizations form the basis for my model of nuclear power. The first is that since reactors have a finite lifespan averaging around 40 years, a lot of the world's reactors are rapidly approaching the end of their useful life. The second is that the replacement rate inferred from the UIC planning table is only about three to four reactors per year for at least the next ten years, and probably the next twenty.

These two facts mean that within the next twenty years we will have retired over 300 reactors, but will have built only 60. So by 2030 we will have seen a net loss of 240 or more reactors: over half the present stock. Since these reactors are all broadly similar in size (a bit less than 1 GW on average) that means we can calculate the approximate world generating capacity at any moment in time, with reasonable accuracy out to 2030 or so.
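The reactor arithmetic above is easy to reproduce. The sketch below uses only the figures quoted in the text: 439 reactors today, "over 300" retirements (taken here as exactly 300, so the loss is a lower bound), and three to four completions per year for twenty years. The function and variable names are illustrative.

```python
PRESENT_REACTORS = 439   # from the reactor-age table cited in the text
RETIRED = 300            # "over 300" in the text, so this is a floor
YEARS = 20


def net_reactor_change(retired, builds_per_year, years):
    """Return (reactors built, net change in reactor count)."""
    built = builds_per_year * years
    return built, built - retired


for builds_per_year in (3, 4):
    built, net = net_reactor_change(RETIRED, builds_per_year, YEARS)
    share_lost = -net / PRESENT_REACTORS
    print(f"{builds_per_year} per year: {built} built, net change {net}, "
          f"{share_lost:.0%} of the present stock lost")
```

At three completions per year this gives 60 new reactors and a net loss of 240, about 55% of the present stock. Even at four per year the net loss is still 220 reactors, or about half.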

The model takes a generous interpretation of the available data. It assumes we will build 3 GW of nuclear capacity per year for the next ten years (about what is under construction now), 4.5 GW per year for the subsequent ten years (these are the reactors in the planning stages that will probably end up being built), and 6 GW/year for the 20 years following that from the reactors that have been proposed. It assumes a rising construction profile because I think we will start to get desperate for power in about 20 years - this is the reason reactor completions double over that period compared to today.
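As a rough check on how much new capacity this construction schedule delivers, the three phases can be added up. This is a minimal sketch using only the build rates stated above; the names are illustrative.

```python
# (duration in years, GW completed per year) for each phase in the text
BUILD_PHASES = [(10, 3.0), (10, 4.5), (20, 6.0)]


def cumulative_additions(phases):
    """Return [(years elapsed, total GW added so far)] after each phase."""
    total_gw = 0.0
    elapsed = 0
    milestones = []
    for years, gw_per_year in phases:
        total_gw += years * gw_per_year
        elapsed += years
        milestones.append((elapsed, total_gw))
    return milestones


for elapsed, total_gw in cumulative_additions(BUILD_PHASES):
    print(f"after {elapsed} years: {total_gw:.0f} GW added")
```

The schedule adds 30 GW in the first decade, 75 GW after twenty years and 195 GW after forty. At a bit less than 1 GW per reactor, that is on the order of 200 reactors over forty years.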

Figure 7: Global Nuclear Production, 1965 to 2100

The drop in capacity between now and 2030 is the result of new construction not keeping pace with the rapid decommissioning of large numbers of old reactors. The rise after 2030 comes from my prediction that we will double the pace of reactor construction in about 2025 when the energy situation starts to become visibly desperate and we realize that most of the reactors from the 1970-1990 building boom are out of service. The final decline after 2060 comes from my expectation that we will start losing global industrial capacity in a big way in a few decades due to the decline in oil and natural gas. As a result, by 2060 we won't have the capability we would need to replace all our aging nuclear reactors.

The argument for a peak in nuclear capacity in 2010 and the subsequent drop is very similar to the logistical considerations behind Peak Oil - the big pool of reactors is about to be exhausted, and we're not building enough replacements. In fact, to stay even with the rate of decommissioning of our current reactor base we would need to build 17 new reactors a year (more than 5 times the number that are now on the books) forever. That seems very unlikely given the capital, regulatory and public relations environments that the nuclear industry is now operating in.

As an aside, the drop in generating capacity after 2010 means that any concerns about outstripping the supply of mined uranium (currently about 50,000 tonnes per year worldwide) are avoided altogether.

Hydro

If coal is the ugly stepsister, hydro is one of the fairy godmothers of the energy story. Environmentally speaking it's relatively clean, if perhaps not quite as clean as once thought. It has the ability to supply large amounts of electricity quite consistently. The technology is well understood, universally available and not too technically demanding (at least compared to nuclear power). Dams and generators last a long time.

It has its share of problems, though they tend to be quite localized. Destruction of habitat due to flooding, the release of CO2 and methane from flooded vegetation, and the disruption of river flows are the primary issues. In terms of further development the main obstacle is that in many places the best hydro sites are already being used.

Nevertheless, it is an attractive energy source. Development will probably continue in the future at a pace similar to that of the past, at least until loss of technological capacity or demand makes further development moot.

In order to project the growth rate of hydro power, I used a second order polynomial curve fitted to the production history of the past 40 years. Using such a projection assumes that future development will look very much like the past, at least until an external influence alters the course of events. The projection is shown in Figure 8. One thing that gives confidence in the reliability of the projection is the high correlation of the chosen curve to the actual data, as shown in the R-squared value of 0.994 (the closer to 1.0 the better the fit).

Figure 8: Projected Hydro Production
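For readers unfamiliar with the technique, the sketch below shows how a second order polynomial can be fitted by least squares and how R-squared is computed. It is a generic illustration, not the spreadsheet fit behind Figure 8. The data points are made-up placeholders, not real hydro statistics, and the function names are illustrative.

```python
def fit_quadratic(xs, ys):
    """Least-squares fit of y = a + b*t + c*t**2, where t = x - mean(x).

    Centring the years keeps the normal equations well conditioned.
    Returns a function that evaluates the fitted curve at any x."""
    n = len(xs)
    x_mean = sum(xs) / n
    ts = [x - x_mean for x in xs]
    # Power sums needed for the 3x3 normal equations.
    s = [sum(t ** k for t in ts) for k in range(5)]
    r = [sum(y * t ** k for t, y in zip(ts, ys)) for k in range(3)]
    m = [[s[i + j] for j in range(3)] + [r[i]] for i in range(3)]
    # Gaussian elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda row: abs(m[row][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, 3):
            factor = m[row][col] / m[col][col]
            for k in range(col, 4):
                m[row][k] -= factor * m[col][k]
    # Back substitution.
    coeffs = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        known = sum(m[i][j] * coeffs[j] for j in range(i + 1, 3))
        coeffs[i] = (m[i][3] - known) / m[i][i]
    a, b, c = coeffs
    return lambda x: a + b * (x - x_mean) + c * (x - x_mean) ** 2


def r_squared(xs, ys, model):
    """Share of the variance in ys explained by the fitted model."""
    y_mean = sum(ys) / len(ys)
    ss_res = sum((y - model(x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - y_mean) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


# Placeholder data only: invented values, NOT real production figures.
years = [1965, 1970, 1975, 1980, 1985, 1990, 1995, 2000, 2005]
output = [210, 265, 330, 385, 450, 490, 560, 600, 660]

curve = fit_quadratic(years, output)
print("R-squared:", round(r_squared(years, output, curve), 3))
print("extrapolated value for 2030:", round(curve(2030)))
```

R-squared measures only how closely the curve follows the historical points it was fitted to.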

The model for hydro power shown in Figure 9 has capacity growing to about double its current level by 2060. It then declines again to the current level by 2100. The decline in the second half of the century is ascribed to a general loss of global industrial capacity and a reduction in water flows due to global warming. These are the external influences mentioned above.

Figure 9: Global Hydro Production, 1965 to 2100

Renewable Energy

Renewable energy includes such sources as wind, photovoltaic and thermal solar, tidal and wave power etc. Assessing their probable contribution to the future energy mix is one of the more difficult balancing acts encountered in the construction of this model. The whole renewable energy industry is still in its infancy. At the moment, therefore, it shows little impact but enormous promise. While the global contribution is still minor (at the moment renewable technologies supply less than 1% of the world's total energy needs) its growth rate is exceptional. Wind power, for example, has experienced annual growth rates of 30% over the last decade.